The EU AI Act never mentions an “AI inventory” by name. But without one, compliance is practically impossible. Here is what you need to know and how to get started.

Here is a question every executive should be able to answer: How many AI systems are operating in your organisation right now?

If the answer is “I’m not sure,” you are not alone. But you are exposed.

AI is no longer a discrete technology decision. It is embedded in the software your teams already use, woven into vendor platforms, and adopted informally through dozens of small experiments across every function. CRM tools run AI-driven predictions. HR platforms screen candidates with machine learning. Customer support chatbots handle queries autonomously. Marketing automation tools optimise campaigns in real time. Developers integrate large language model APIs into internal workflows.

Most of this happens without a single formal decision that “we are deploying AI.”

And that is exactly where the risk begins. Without a structured AI inventory, organisations have no way to assess what they are using, who is responsible, or whether they are compliant with emerging regulation.

1. The Shadow AI Problem

The term “shadow IT” has been in the governance lexicon for over a decade. Shadow AI is its faster, more consequential successor. As our guide to AI literacy explains, employees across sectors are increasingly using AI tools informally, often without established governance frameworks.

When employees adopt generative AI tools to write emails, summarise documents, or build prototypes, they are making decisions about data handling, intellectual property, and output reliability, often without realising it. When vendors introduce AI-powered features inside existing platforms, the risk profile of a tool your organisation already approved can change overnight, without your compliance, security, or legal teams ever being notified.

The result is a growing gap between what leadership believes is happening and what is actually happening. Compliance teams cannot assess obligations they do not know exist. Security teams cannot evaluate threat surfaces they cannot see. Legal teams cannot classify risk for systems they have never reviewed.

This is not a hypothetical problem. It is the current state of most enterprises. That is why AI inventory management has become an operational necessity, not an administrative nice-to-have.

2. The Regulatory Landscape Has Shifted

For years, AI governance was treated as aspirational: something forward-thinking companies pursued voluntarily. That era is over.

2.1 The EU AI Act

The EU AI Act represents the world’s first comprehensive regulatory framework for artificial intelligence. With most substantive obligations beginning to apply from 2 August 2026 and phased implementation depending on risk level and use case, the regulation introduces a risk-based classification system that categorises AI systems as unacceptable, high, limited, or minimal risk.

For high-risk AI systems, the obligations are substantial: technical documentation, conformity assessments, human oversight mechanisms, risk management processes, data governance controls, logging and traceability, and fundamental rights impact assessments. Providers and deployers alike carry specific responsibilities.

The core requirement is straightforward: organisations must know what AI they use, classify it appropriately, and demonstrate compliance. Failure to do so can result in significant penalties: up to €35 million or 7% of global annual turnover for the most serious infringements, with lower tiers for other breaches.

2.2 ISO/IEC 42001

Running in parallel with regulatory mandates, ISO/IEC 42001 establishes the first international standard for AI Management Systems (AIMS). While certification is voluntary, it is rapidly becoming a market-driven expectation, much as ISO 27001 became the de facto standard for information security management.

ISO 42001 addresses the operational dimensions of responsible AI: risk management, data governance, human oversight, monitoring and measurement, internal audits, management review, and continuous improvement. Where the EU AI Act defines what the law requires, ISO 42001 provides a structured management system for how to meet those requirements sustainably.

One is regulatory compliance. The other is operational excellence. Together, they define the new baseline that boards, clients, and partners will increasingly demand.

3. The Foundation: Build an AI Inventory

Before you draft policy. Before you score risk. Before you pursue certification. You need one thing: visibility.

An AI inventory is the foundational artefact of any credible AI governance programme. It is a structured, maintained register of every AI system your organisation develops, deploys, procures, or interacts with, including those embedded in third-party tools and vendor platforms.

Without an AI inventory, compliance is guesswork. With one, governance becomes operational. Effective AI inventory management is the difference between an organisation that can answer a regulator’s questions instantly and one that scrambles to piece together fragmented information from across departments.

4. The GDPR Parallel: Your ROPA for AI

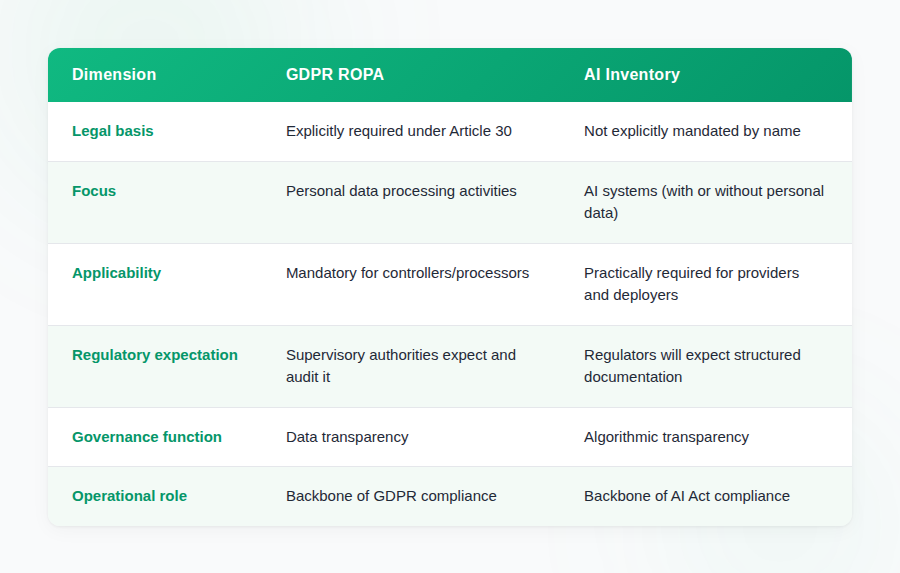

If your organisation has been through GDPR compliance, the concept of an AI inventory will feel familiar. Under GDPR, Article 30 explicitly requires controllers and processors to maintain a Record of Processing Activities: the ROPA. It became the operational backbone of data privacy compliance across Europe and beyond. The UK retains a parallel obligation through the UK GDPR, meaning organisations operating in the United Kingdom face the same record-keeping expectations.

The EU AI Act does not contain an equivalent provision. There is no single article that says “providers and deployers shall maintain a register of AI systems.” The term “AI inventory” does not appear in the regulation.

But the parallel is instructive, and the practical outcome is the same. GDPR created ROPA as the mechanism for data transparency. The EU AI Act creates the conditions that make an AI inventory the mechanism for algorithmic transparency. One is explicitly mandated. The other is structurally required. Both are essential for compliance.

The lesson from GDPR is clear: organisations that built their ROPA early had a structural advantage when enforcement began. The same dynamic is now unfolding with AI governance. Organisations that build their AI inventory now, before enforcement pressure intensifies, will be in a fundamentally stronger position.

5. The Legal Case: Why an AI Inventory Is Structurally Required

One of the most common misconceptions about the EU AI Act is that it explicitly mandates an “AI inventory.” It does not. Not in those words. But when you examine the obligations the regulation does impose, the conclusion is inescapable: maintaining a structured internal register of AI systems is a practical necessity for compliance. Here is why, article by article.

Article 9 — Risk Management System

Article 9 requires providers of high-risk AI systems to establish, implement, document, and maintain a risk management system throughout the entire lifecycle of the AI system. This is not a one-time assessment; it is an ongoing obligation. You cannot implement or document risk management without knowing:

- Which AI systems exist within your organisation

- What their intended purpose is

- How they are classified under the regulation’s risk framework

- What data inputs and outputs they rely on

An AI inventory is a practical precondition for compliance with Article 9. You cannot manage risk for systems you have not identified.

Article 11 — Technical Documentation

Article 11 requires providers to draw up and maintain technical documentation demonstrating compliance. It must be prepared before the system is placed on the market and kept up to date. The required documentation spans:

- System design, development methodology, and architecture

- Risk management processes and outcomes

- Data governance practices, including training data characteristics

- Human oversight mechanisms and their implementation

- Performance metrics, testing results, and validation

To manage this documentation across multiple AI systems, organisations must track those systems in a structured, centralised way. Without an AI inventory, technical documentation becomes fragmented and impossible to audit.

Article 12 — Record-Keeping and Logging

High-risk AI systems must be designed with automatic logging capabilities to ensure traceability, facilitate post-market monitoring, and support regulatory oversight. Organisations must:

- Retain logs for the required periods

- Ensure traceability of system decisions and outputs

- Enable regulatory authorities to access and review records

You cannot manage logging and traceability for AI systems that are not registered in a central record. Article 12 compliance presupposes that you know what systems generate logs, where they are stored, and who is responsible.

Article 14 — Human Oversight

High-risk AI systems must be designed with appropriate human oversight during the period the system is in use. This demands that organisations actively identify where oversight is needed, who provides it, and how it is implemented:

- Identify which AI systems require human oversight based on risk classification

- Assign specific individuals or roles responsible for exercising oversight

- Track the implementation and effectiveness of oversight mechanisms over time

This functionally requires an internal registry. Without one, there is no systematic way to ensure every high-risk system has appropriate, assigned, and documented human oversight.

Article 26 — Obligations of Deployers of High-Risk AI Systems

Article 26 of the final regulation (numbered Article 29 in earlier drafts) shifts the focus to deployers: organisations that use high-risk AI systems, even if they did not develop them. Deployers must:

- Use AI systems in accordance with the provider’s instructions

- Monitor the operation of the AI system for potential risks

- Retain logs generated by the system, where applicable

- Ensure that human oversight is in place as required

- Conduct fundamental rights impact assessments for certain use cases (set out separately in Article 27)

To meet these deployer obligations, organisations need structured knowledge of every AI system they have deployed. An AI inventory is the mechanism that makes this possible.

Articles 49 and 71 — Registration in the EU Database for High-Risk AI Systems

Under Article 49, providers and, in certain cases, deployers must register high-risk AI systems in the EU database established by Article 71 before placing them on the market or putting them into service. This external registration creates an immediate internal prerequisite:

- Identify which of your AI systems qualify as high-risk

- Determine which systems require registration

- Prepare required information including intended purpose, risk classification, and conformity assessment status

Before you can register externally, you must know internally what qualifies. An AI inventory is the bridge between your internal governance and the EU’s external registration requirements.

⚠️ Important Legal Framing

While the EU AI Act does not explicitly use the term “AI inventory,” compliance with Articles 9, 11, 12, 14, 26 (deployer obligations), and 49 and 71 (EU database registration) makes maintaining a structured internal register of AI systems a practical necessity.

The inventory is not named in the regulation. But it is structurally required by the regulation’s obligations.

6. What Should an AI Inventory Include?

A comprehensive AI inventory must serve two masters: EU AI Act compliance and ISO/IEC 42001 management system alignment. It must also integrate data governance and personal data protection as a central pillar, not an afterthought.

A. Core Identification Fields

Every AI system in the inventory must be uniquely identifiable and contextualised:

- AI system name and unique system identifier

- Description of the system and its intended purpose

- Business function and deploying department

- Deployment status: development, testing, or production

- Provider or vendor: internal development or external procurement

- System owner and responsible department
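As a minimal sketch, the core identification fields above could be modelled as a typed record. The class and field names here (`CoreIdentification`, `system_id`, and so on) are illustrative assumptions, not terms taken from the EU AI Act or from any particular tool. The helper only checks that identifiers are unique.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class DeploymentStatus(Enum):
    DEVELOPMENT = "development"
    TESTING = "testing"
    PRODUCTION = "production"


@dataclass(frozen=True)
class CoreIdentification:
    """Core identification fields for one inventory entry (hypothetical names)."""

    system_id: str            # unique system identifier
    name: str
    description: str
    intended_purpose: str
    business_function: str
    department: str           # deploying department
    status: DeploymentStatus
    provider: str             # vendor name, or "internal" for in-house development
    owner: str                # accountable system owner


def find_duplicate_ids(entries: list[CoreIdentification]) -> set[str]:
    """Return identifiers that appear more than once in the inventory."""
    seen: set[str] = set()
    duplicates: set[str] = set()
    for entry in entries:
        if entry.system_id in seen:
            duplicates.add(entry.system_id)
        seen.add(entry.system_id)
    return duplicates
```

Using an enumeration for deployment status keeps the register to the three states listed above and avoids free-text variants such as "prod" or "live".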

B. EU AI Act-Specific Fields

Risk Classification

Every AI system must be classified under the Act’s four-tier risk framework: unacceptable, high, limited, or minimal risk. For high-risk systems, the inventory should capture the specific category: biometric identification, employment, education, essential services, law enforcement, migration, justice, or critical infrastructure.
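One way to record this in a register is sketched below, assuming the four tiers and the high-risk categories exactly as listed in the paragraph above. The enum and function names are hypothetical. The check only verifies that the recorded classification is internally consistent. It does not decide the classification, which remains a legal judgement.

```python
from __future__ import annotations

from enum import Enum
from typing import Optional


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


class HighRiskCategory(Enum):
    BIOMETRIC_IDENTIFICATION = "biometric identification"
    EMPLOYMENT = "employment"
    EDUCATION = "education"
    ESSENTIAL_SERVICES = "essential services"
    LAW_ENFORCEMENT = "law enforcement"
    MIGRATION = "migration"
    JUSTICE = "justice"
    CRITICAL_INFRASTRUCTURE = "critical infrastructure"


def classification_problems(
    tier: RiskTier, category: Optional[HighRiskCategory]
) -> list[str]:
    """Flag inconsistencies between the recorded tier and high-risk category."""
    problems: list[str] = []
    if tier is RiskTier.HIGH and category is None:
        problems.append("high-risk entry is missing its category")
    if tier is not RiskTier.HIGH and category is not None:
        problems.append("category recorded on an entry that is not high-risk")
    return problems
```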

Conformity and Documentation

- Whether a conformity assessment is required

- Whether CE marking is required

- Technical documentation availability and status

- EU database registration status, where applicable

Human Oversight

- Description of the human oversight mechanism in place

- The individual or role responsible for oversight

- The escalation pathway for issues or anomalies

Logging and Traceability

- Whether logging is enabled

- Log retention period

- The individual or team responsible for monitoring

C. Data Governance and Personal Data Protection

AI governance without data governance is incomplete.

Under both the EU AI Act and GDPR, organisations must document the data dimensions of every AI system. An AI inventory that ignores personal data processing is a liability, not an asset.

- Whether personal data is processed

- Whether special category data is processed

- Categories of data subjects and data sources

- Lawful basis under GDPR, if applicable

- Data quality and representativeness measures

- Bias mitigation and data minimisation measures

- Storage limitation policies and security controls

- Whether a DPIA or Fundamental Rights Impact Assessment is required
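A few of the fields above lend themselves to simple consistency checks. The sketch below is illustrative only: the record shape and the three rules are assumptions chosen for the example, and they are no substitute for a data protection review.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class DataGovernanceRecord:
    """Data-related fields for one inventory entry (hypothetical names)."""

    system_id: str
    processes_personal_data: bool
    processes_special_category_data: bool
    lawful_basis: str = ""               # empty string when not yet recorded
    dpia_or_fria_assessed: bool = False  # has the need for a DPIA/FRIA been assessed?


def data_governance_gaps(record: DataGovernanceRecord) -> list[str]:
    """Return the gaps a reviewer would want to close for this entry."""
    gaps: list[str] = []
    # Special category data is a subset of personal data, so this pairing is inconsistent.
    if record.processes_special_category_data and not record.processes_personal_data:
        gaps.append("special category data flagged but personal data is not")
    if record.processes_personal_data and not record.lawful_basis.strip():
        gaps.append("lawful basis not recorded")
    if record.processes_personal_data and not record.dpia_or_fria_assessed:
        gaps.append("need for DPIA/FRIA not yet assessed")
    return gaps
```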

D. ISO/IEC 42001 Alignment Fields

ISO 42001 expands from system-level compliance to organisational governance maturity. An inventory aligned with the standard should also track:

- Inclusion within the AI Management System (AIMS) scope

- Whether a risk assessment has been conducted

- Linked AI policy reference

- Operational controls implemented

- Monitoring and measurement processes defined

- Whether internal audit and management review have been completed

- Continual improvement actions logged

ISO 42001 focuses on management system maturity, not only regulatory compliance. A mature AI inventory management programme serves both the EU AI Act and ISO 42001 simultaneously.

7. The Strategic Reality: What Regulators Will Actually Ask

Regulatory frameworks describe obligations. But enforcement is driven by questions. And the questions regulators will ask are straightforward:

“Which AI systems do you use?”

“Which are high-risk?”

“Show your risk management documentation.”

“Show your human oversight structure.”

“Show your technical documentation.”

“Show your logging and traceability records.”

If your organisation’s answer is “We think we have a few…” that will not be defensible. Not before a regulator. Not before a board. Not before a client conducting due diligence.

The organisations that will navigate this regulatory environment successfully are those that can answer these questions instantly, with structured evidence. An AI inventory is not an administrative exercise. It is the operational backbone of compliance: the single artefact that connects risk management, technical documentation, human oversight, logging, and data governance into a coherent, auditable whole.
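To make this concrete, here is a minimal sketch of how a structured inventory could answer the questions above on demand. The entry shape and field names are assumptions made for illustration. A real register would carry links to the underlying evidence rather than simple flags.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class InventoryEntry:
    """Evidence flags for one AI system (hypothetical field names)."""

    system_id: str
    risk_tier: str                    # "unacceptable", "high", "limited", or "minimal"
    has_risk_documentation: bool
    oversight_owner: str              # empty string when nobody is assigned
    has_technical_documentation: bool
    logging_enabled: bool


def high_risk_ids(entries: list[InventoryEntry]) -> list[str]:
    """Answer "Which are high-risk?"."""
    return [e.system_id for e in entries if e.risk_tier == "high"]


def evidence_gaps(entries: list[InventoryEntry]) -> dict[str, list[str]]:
    """For each high-risk system, list the evidence that cannot yet be shown."""
    gaps: dict[str, list[str]] = {}
    for e in entries:
        if e.risk_tier != "high":
            continue
        missing: list[str] = []
        if not e.has_risk_documentation:
            missing.append("risk management documentation")
        if not e.oversight_owner.strip():
            missing.append("human oversight owner")
        if not e.has_technical_documentation:
            missing.append("technical documentation")
        if not e.logging_enabled:
            missing.append("logging")
        if missing:
            gaps[e.system_id] = missing
    return gaps
```

An empty result from `evidence_gaps` means every high-risk entry has all four flags set. It does not mean the underlying documentation is adequate.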

8. Why Spreadsheets Are Not Enough

Most organisations begin their AI inventory in Excel. That is understandable, and it is a better starting point than having nothing at all.

But spreadsheets introduce structural limitations that become liabilities as your AI footprint grows. They depend on manual updates, offer no real-time monitoring, provide no automated risk classification, generate no audit trail, lack cross-functional visibility, do not integrate with vendor management workflows, have no enforced version control, and cannot produce automated reporting.

In short, you are tracking AI at human speed. AI evolves at machine speed.

When a vendor updates its platform to include a new AI feature, your spreadsheet does not update itself. When a team experiments with a new model, no row appears automatically. When a risk classification changes because of a regulatory update, there is no alert. The inventory drifts from reality with every passing week, and with it, your compliance posture.

9. The Case for AI Inventory Management Software

An automated AI inventory management software solution transforms governance from static documentation into living operational infrastructure. The advantages are not incremental; they are structural.

A centralised AI system registry provides a single source of truth accessible to every stakeholder. Structured risk classification aligned with the EU AI Act eliminates ambiguity. Built-in ISO 42001 alignment ensures your inventory serves both regulatory and certification objectives. Vendor AI tracking links procurement decisions to governance obligations. Personal data and GDPR mapping connects AI risk to data protection requirements. Automated compliance status tracking surfaces gaps before auditors do. Review workflows and ownership assignment create accountability. Version control and audit trails provide the evidentiary record regulators expect. Dashboard-level reporting gives boards the visibility they need to govern effectively.

The shift is from asking “Where are we using AI?” to answering that question instantly, with evidence. Whisperly delivers exactly this: end-to-end AI inventory management with AI intake, approvals, a centralised inventory, and automated compliance checks, all aligned with the EU AI Act and ISO 42001.

10. Start Now, Scale Later

Not every organisation is ready to automate immediately, and that is fine. The critical step is to start.

Free AI Inventory Template — Get Started Today

We provide a free EU AI Act AI Inventory Template designed to help you take the first structured step toward compliance. The template includes:

- EU AI Act risk classification fields aligned with Articles 9, 11, 12, 14, 26, 49, and 71

- Complete data governance and GDPR mapping

- ISO/IEC 42001 alignment fields for management system integration

- Core identification fields: system name, purpose, owner, department, and deployment status

- Human oversight tracking and conformity assessment status

- Vendor tracking and due diligence mapping

- Logging and traceability fields

- Dashboard reporting structure

Start tracking every AI system you use, before regulators do

Begin with the template. Map every AI system you can identify. Assign ownership. Classify risk. Document data flows. Then build the discipline of maintaining and reviewing that AI inventory on a regular cadence.

But do not stay in the spreadsheet. As your AI footprint grows (and it will), the gap between what your inventory reflects and what is actually happening will widen. The organisations that invest in AI inventory management software early will have a compounding advantage in compliance readiness, operational efficiency, and stakeholder trust.

AI governance is not a future concern. It is operational infrastructure that starts with a single, foundational step: knowing what you have.

11. Further Reading on Whisperly

- EU AI Act Summary — A comprehensive overview of the regulation

- EU AI Act Guidebook — Step-by-step compliance guidance

- High-Risk AI Systems — Understanding classification and obligations

- EU AI Act Prohibited Practices — What is banned under Article 5

- EU AI Act Penalties — Fines and enforcement consequences

- ISO 42001 Guidebook — Certification roadmap and requirements

- AI Governance — Strategic overview for C-level leaders

- Responsible AI — Building trust through ethical AI practices

- AI Literacy — Meeting Article 4 training obligations

- General-Purpose AI (GPAI) — Regulation of foundation models

- GPAI Training Transparency Template — Documentation requirements

- Authorized Representative — Requirements for non-EU providers

- EU AI Act Compliance Checker — Assess your obligations

12. Questions & Answers

What exactly is an AI inventory, and why does it matter?

An AI inventory is a structured register of every AI system your organisation develops, deploys, procures, or interacts with. It matters because you cannot govern what you cannot see. The EU AI Act requires documentation, risk management, and oversight for AI systems, particularly those classified as high-risk. An inventory provides the foundational visibility needed to assess obligations, classify risk, assign accountability, and demonstrate compliance. Without one, any AI governance effort is built on assumptions rather than evidence.

Does the EU AI Act explicitly require an AI inventory?

Not in those exact words. Unlike GDPR, which explicitly requires a Record of Processing Activities under Article 30, the EU AI Act does not mandate an “AI register.” However, compliance with Articles 9 (risk management), 11 (technical documentation), 12 (record-keeping), 14 (human oversight), 26 (deployer obligations), and 49 and 71 (EU database registration) makes maintaining a structured internal register a practical necessity. The inventory is not named in the regulation, but it is structurally required by its obligations.

How does an AI inventory compare to the GDPR ROPA?

The ROPA under GDPR Article 30 is an explicitly mandated register of personal data processing activities. An AI inventory serves a parallel function for AI governance: it is the structured register that makes compliance operationally possible. ROPA provides data transparency; an AI inventory provides algorithmic transparency. Both are essential governance artefacts, even though only ROPA is explicitly named in law.

Does the EU AI Act apply to organisations outside the EU?

Yes, in many cases. The EU AI Act has extraterritorial reach. If your AI system’s output is used within the European Union, the regulation can apply regardless of where your organisation is headquartered. Non-EU providers of high-risk AI systems must also appoint an Authorized Representative within the EU. Any organisation that places AI systems on the EU market should assess its obligations under the Act.

What is the difference between the EU AI Act and ISO/IEC 42001?

The EU AI Act is law: mandatory, enforceable, and carrying penalties for non-compliance. ISO 42001 is a voluntary certification standard for AI management systems. The EU AI Act defines what you must do; ISO 42001 provides a structured framework for how to do it sustainably. In practice, the two are complementary.

What is shadow AI, and how do I detect it?

Shadow AI refers to AI systems operating without formal approval or oversight. This includes generative AI tools adopted by employees, AI-powered features embedded in vendor platforms, and API integrations built outside formal procurement. Detecting it requires surveying teams, auditing vendor contracts, reviewing API usage logs, and establishing a culture where teams register AI usage proactively. Building AI literacy across your organisation is a critical enabler of shadow AI detection.
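The log-review step can be sketched as a simple reconciliation: compare the AI services seen in usage logs with those already registered. The hostnames below are made-up placeholders, and how the observed list is extracted from your logs is left as an assumption.

```python
from __future__ import annotations

from collections.abc import Iterable


def unregistered_services(
    observed: Iterable[str], registered: Iterable[str]
) -> list[str]:
    """Return services seen in usage logs that are absent from the inventory.

    Names are compared case-insensitively, ignoring surrounding whitespace.
    """
    registered_set = {name.strip().lower() for name in registered}
    observed_set = {name.strip().lower() for name in observed}
    return sorted(observed_set - registered_set)


# Hypothetical example: hostnames pulled from an API gateway or proxy log.
seen_in_logs = [
    "API.example-llm.test",
    "chat.example-assistant.test ",
    "api.example-llm.test",
]
in_inventory = ["chat.example-assistant.test"]
candidates = unregistered_services(seen_in_logs, in_inventory)
```

Anything this returns is a candidate for review, not proof of shadow AI. A reviewer still has to confirm what the service is and who is using it.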

Why is data governance central to AI inventory management?

AI systems are fundamentally dependent on data. The EU AI Act requires documentation of data governance practices, and GDPR obligations apply whenever personal data is involved. An AI inventory that does not map data flows, document lawful basis, or track bias mitigation measures is incomplete. Organisations should treat their AI inventory and data governance framework as interconnected systems.

What are the penalties for non-compliance?

The EU AI Act penalty structure is tiered. Fines for prohibited AI practices can reach €35 million or 7% of global annual turnover, whichever is higher. Non-compliance with high-risk requirements can result in fines of up to €15 million or 3%. Supplying incorrect, incomplete, or misleading information to authorities can incur fines of up to €7.5 million or 1%. The cost of non-compliance far exceeds the cost of structured AI inventory management.
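As a worked illustration, the ceiling for each tier can be computed from annual turnover. This sketch assumes the "whichever is higher" rule that applies to undertakings generally (different rules apply to SMEs). It gives the maximum possible fine, not the fine an authority would actually impose. The tier keys are hypothetical labels.

```python
from decimal import Decimal

# (fixed ceiling in EUR, share of global annual turnover) per tier
PENALTY_TIERS = {
    "prohibited_practice": (Decimal("35000000"), Decimal("0.07")),
    "high_risk_requirements": (Decimal("15000000"), Decimal("0.03")),
    "misleading_information": (Decimal("7500000"), Decimal("0.01")),
}


def fine_ceiling(tier: str, annual_turnover_eur: Decimal) -> Decimal:
    """Return the higher of the fixed amount and the turnover-based amount."""
    fixed_amount, turnover_share = PENALTY_TIERS[tier]
    return max(fixed_amount, turnover_share * annual_turnover_eur)


# A group with EUR 2 billion turnover: 7% is EUR 140 million, above the EUR 35 million floor.
large_group = fine_ceiling("prohibited_practice", Decimal("2000000000"))

# A company with EUR 100 million turnover: 3% is EUR 3 million, so the EUR 15 million figure applies.
mid_sized = fine_ceiling("high_risk_requirements", Decimal("100000000"))
```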

Can I start with a spreadsheet?

Absolutely, and we encourage it. Our free AI Inventory template is designed for exactly this purpose, covering EU AI Act risk classification, ISO 42001 alignment, data governance mapping, vendor tracking, and all core compliance fields. It provides meaningful value from day one. As your footprint grows, plan to transition to AI inventory management software like Whisperly that can scale with your needs.

Who should own the AI inventory?

Ownership typically sits with the function responsible for AI governance, whether that is the CIO, CTO, Chief Compliance Officer, Data Protection Officer, or a dedicated AI governance lead. What matters most is clear ownership, cross-functional authority, and integration into executive-level reporting.

How often should an AI inventory be updated?

Treat it as a living document. Review quarterly at minimum; update continuously as new systems are deployed, vendor features change, or regulations evolve. Automated AI inventory management software makes continuous maintenance feasible. With manual processes, establish a regular cadence and assign clear ownership.
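With a manual process, even a small script can enforce the cadence. The sketch below approximates "quarterly" as 90 days, which is an assumption, and takes a mapping from hypothetical system identifiers to last review dates.

```python
from __future__ import annotations

from datetime import date, timedelta

REVIEW_INTERVAL = timedelta(days=90)  # assumption: quarterly approximated as 90 days


def overdue_reviews(last_reviewed: dict[str, date], today: date) -> list[str]:
    """Return identifiers of entries whose last review is older than the interval."""
    return sorted(
        system_id
        for system_id, reviewed_on in last_reviewed.items()
        if today - reviewed_on > REVIEW_INTERVAL
    )


# Hypothetical register extract
reviews = {"AI-001": date(2025, 1, 1), "AI-002": date(2025, 3, 20)}
overdue = overdue_reviews(reviews, today=date(2025, 4, 15))
```

Passing `today` explicitly, rather than calling `date.today()` inside the function, keeps the check reproducible when it is re-run for an audit.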

Where can I get started today?

Download our free AI Inventory template from Whisperly. It gives you a structured starting point aligned with the EU AI Act and ISO 42001. You do not need specialised software to begin, but you do need to begin. The organisations that build visibility now will be best positioned when enforcement begins.
